The extensive influence of ancient Gnosticism on the Matrix movies is by now well known. Gnosticism itself was heavily influenced by Platonism, and I believe that at certain crucial points Plato provides an even closer analogue to the Matrix than Gnosticism does. I am thinking of Plato’s Allegory of the Cave. Let’s refresh our memories. Plato asks us to picture a small community dwelling in a huge cavern. The troglodytes have been born and raised there and do not even suspect the existence of the outer, sunlit surface realm. Reality as they know it is pretty much two-dimensional. You see, each individual is bound firmly in place, head braced to face forward. They can converse with their neighbors, but all they ever see is a sequence of shadows passing before them, projected from behind them by other individuals who hold up plywood cut-outs of men, women, animals, etc. The prisoners of the Cave see nothing amiss in all this, but one day one of them somehow breaks free, moves his stiff neck from side to side, and is amazed to view the true situation!

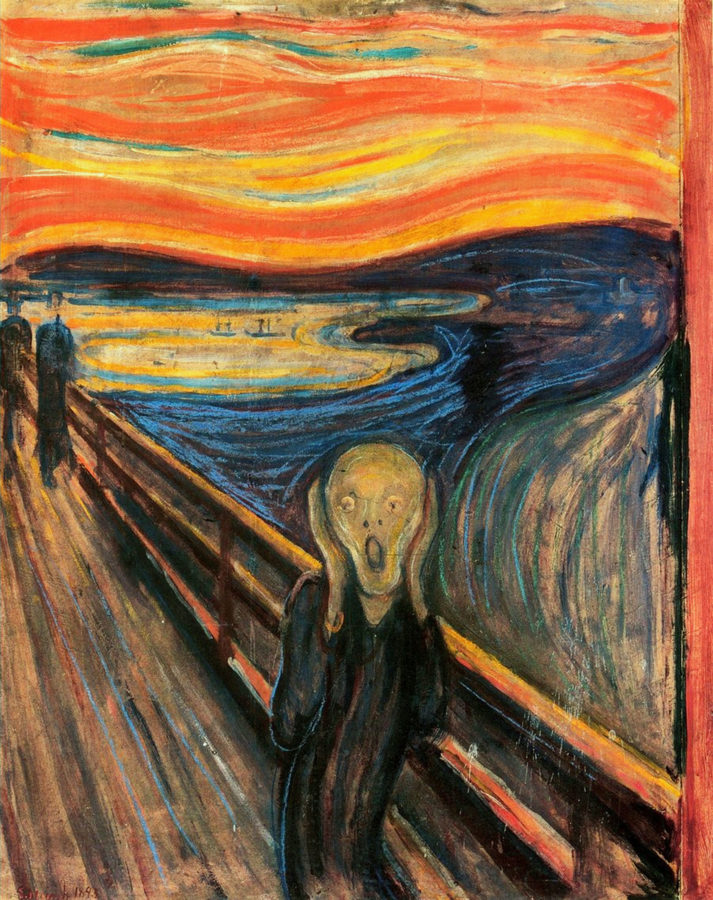

He manages to escape the Cave, stumbling up the tunnel and into the blinding noonday sunlight. For a time he can see nothing, even less than he could see down below. But his eyes soon adjust, and he looks about him in wide-eyed astonishment! He beholds the world of living, substantial objects that you and I take for granted every day. Have you ever seen those videos in which a person, usually a child, congenitally blind or deaf, is fitted with some new device to supplement his senses? At once he or she is beaming with joy! And so is Plato’s escapee. For the first time, he sees the real world. What he had seen previously he now recognizes as a false world of shadows, dim, flat effigies of things in the surface world.

“I can’t keep this to myself!” he reflects, resolving to return to the Cave long enough to tell the dwellers what has happened to him and to reveal that a more real world awaits outside. (This is Morpheus in The Matrix.) Picture him making the descent and interposing himself between the cut-out bearers and their audience, proclaiming his gospel. And picture his chagrin at the jeering and abuse he receives from those he sought to liberate! The guardians of the Cave, whoever they may be, need not trouble themselves to seize or silence him. Their prisoners, who do not even know themselves to be prisoners, drive him forth, back to the surface world whose existence they refuse to acknowledge.

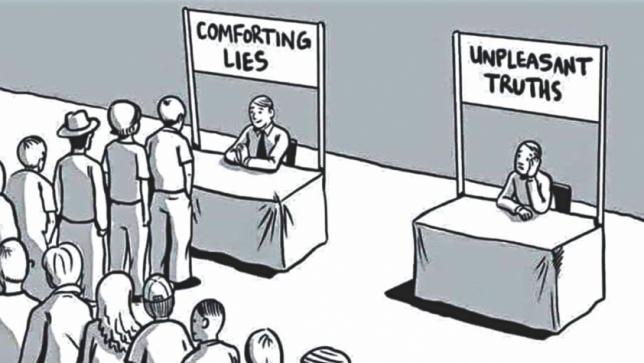

The message of Plato is one of epistemology: what do we really know, how do we know it, and how can we be sure we know it? The philosopher was of course not saying there is an actual Cave filled with captives needing to be rescued, as in some recent news stories. Rather, he was metaphorically diagnosing the general condition of humanity. People think they know the truth about the world and what it contains, but if they are correct about this or that, it is no more than a lucky guess. People are content to rely upon inherited beliefs, assimilating the groundless opinions of the people around them. These are but a poor and unreliable guide. Worse yet, people mistake mere imaginings for reality and guide their lives by them. Plato believed that the path of escape from this cognitive Cave was the discipline of abstract thinking. The study of mathematics in particular would train the mind to discern the true from the false and to arrive at logical certainty. In the Allegory this is pictured by the harsh but welcome revelation of sunlight and all that it illumines. Thus enlightened, one comes to understand that all things in this world are imperfect and impermanent copies of higher Forms or Ideals which are not subject to instability and decay, treasure in heaven where neither moth nor rust corrupt.

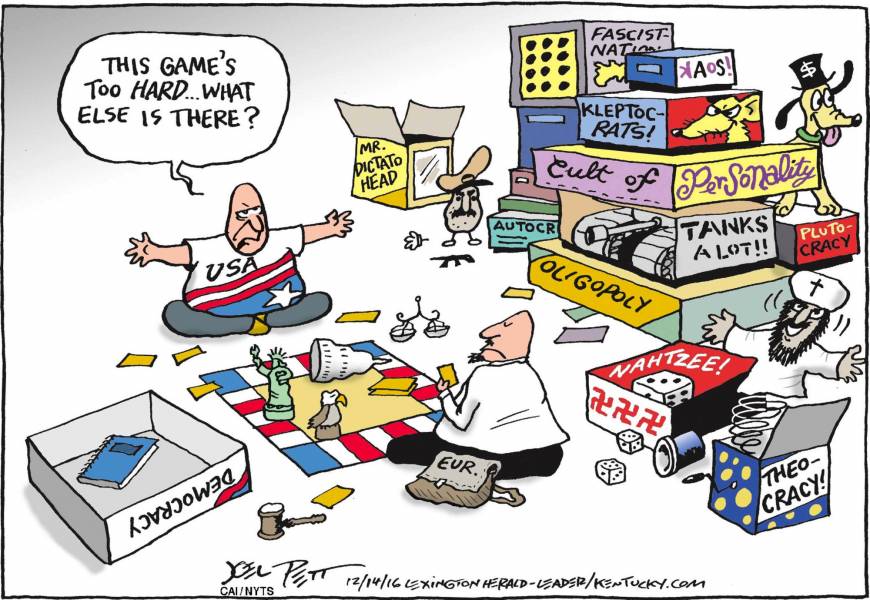

Was not Plato’s Cave scenario a pessimistic one? After all, the refugee from the shadows fails in his attempt to free the others. Plato did not imagine the general run of humanity would ever be able or willing to gain enlightenment. His equally brilliant protégé Aristotle similarly observed that “It is beyond the capacity of most people to draw distinctions” between truth and error. It is essentially a Gnostic position: renouncing naïve hopes as to the education or redemption of the common man, preoccupied as he is with the pleasures and necessities of life (Mark 4:18-19). Applied to politics, this is why Plato disdained democracy as mere mob rule. Or as E.K. Hornbeck (the H.L. Mencken analogue in Inherit the Wind) exclaimed, as he pointed to a howling rabble outside the window: “Those are the slobs and boobs who make our laws.” Thus Plato advocated rule by elite philosopher-kings, those who had made the ascent above and beyond “common sense,” too often common nonsense, to the celestial arcana of pure reason and superior insight into all things.

And here is a terrible irony. How do the philosopher-kings know that they know that/what they know? Why, by direct apprehension of the Truth, of course. In this they resemble Descartes, who deemed it sufficient to recognize as truth whatever seemed “clear and distinct” to the rational mind. But that is a snare and a delusion. It is what Derrida called Presence Metaphysics, the groundless notion that there can be intuitive (virtually telepathic) direct apprehension of truth. To think that way is to ignore the seams, the sedimentary strata, the fingerprints, the signs of derivation and composition of what seems self-evident. Haven’t you ever been willing to stake everything on the truth of something you subsequently discovered, however reluctantly, was a mistake? “How could I have been so wrong?” “What the hell was I thinking?” You might have expected a student of Socrates to possess a bit of Socratic humility, to recognize that “Now we see in a glass darkly.”

Think again of the Allegorical Cave. I mentioned the guardians of the Cave, whoever it was who kept everyone in chains, saw to their feeding, carried the cut-outs across the stage. Why did they do it? Why did they think it worthwhile to trick and deceive their victims? They must have thought it was good for them! Who knew what trouble these poor souls might get into out there in the sunshine world above, confused by the blinding light with its kaleidoscopic colors? Didn’t Plato see that the underground community lived under well-meaning philosopher-kings who wanted only the best for them? Obviously he didn’t. He didn’t have the perspective of history that we have. The leaders of the Aryan Super Race, the Vanguard of the Proletariat, the Grand Inquisitor, and countless others were as completely sure of their oppressive doctrines as any Platonic sovereign.

So is democracy the shining alternative? Winston Churchill seems to have been right in saying that democracy is the worst form of government, except for all the others. One thing’s for sure: “There ain’t no philosopher-kings in this locality.”

As long as it is run by mediocre human beings, government will be terribly flawed. We can make corrections, or say we have, but there is no real reason to think it will ever become some sort of Star Trek Utopia. So we need to arrive at a meaningful way of understanding what we have, with all its frustrating limitations. And here I want to switch to another ancient model that takes this condition seriously and, I think, profoundly. It is the biblical myth of the Principalities and Powers. It is perhaps the perfect candidate for Bultmann’s demythologizing.

It begins with the transition from Israelite polytheism to monotheism, a development I place (much later than most) in the mid-second century BCE, as part of the vaunted Deuteronomic Reform. What were the reformers supposed to do with the seventy or so obsolete deities whom Jews had formerly worshipped? Well, first they said these were the sons of God, to each of whom God had appointed a fiefdom to rule, where he was in charge of answering prayers, defending his people in war, etc. But if there was to be only a single God, these lesser beings had to be redefined. How? When, centuries later, Christianity displaced pagan polytheism, Christians stigmatized Apollo, Mithras, and the rest as demons pure and simple, fallen angels who had rebelled against God, and with predictable results. The Deuteronomists took basically the same path, making the sons of God into fallen angels, too, but with a fascinating difference. These Fallen Ones still ruled their allotted nations. They were still (at least for the present) in charge, and, again, with predictable results! This was the Jewish explanation for evil and oppression in the world, much of the brunt of which Jews steadfastly bore.

But even when not suffering outright persecution by Gentile regimes, Jews maintained a fragile coexistence with the pagan authorities whose subjects they were. For instance, the Jewish priesthood collected Roman taxes at the Jerusalem Temple and even offered daily sacrifices on Caesar’s behalf. It was the cessation of these offerings that brought on the Roman-Jewish War. This ongoing situation was expressed in the myth of the Principalities and Powers. Jews believed that the pagan empires were clients of the Fallen Angels. The gospels depict demons as “unclean spirits,” roaming “lone wolf” devils afflicting random schmucks with insanity and epilepsy. But the epistles speak rather of the Big Bosses: the Powers behind the thrones of the nations.

Jews (and then Christians, too) believed that one day God would clean house, eject these Cosmic Gangsters, and assume direct control of the world. But until then, let’s face it, pagan rule was better than none at all. Would you rather have chaos? The War of All Against All? Modern Bible-derived mythology gives us a good illustration of the difference. You may have seen the three Omen movies in which Damien Thorn is groomed to become the Antichrist. His mission would be to achieve world dictatorship, controlling food supplies in order to force his subjects to worship him and his Father Below. Bad enough, you say? Once Damien is assassinated it only gets worse: in a sequel novel, Gordon McGill’s Omen IV: Armageddon 2000, his son takes over, and he has no interest in ruling the world. Instead he wants to destroy it! Even his Satanist acolytes oppose this!

I’ve always supposed that it was like this in Chicago in the 1920s, when rival gangs were the real authorities. You couldn’t feel truly safe when at any moment a roadster full of Tommy-gun-wielding thugs might round the corner, gunning for their colleagues. But you’d rather live with that than spend your days cowering in the shadows, hiding from nonstop rioters and rapists (like The Purge movies, only 365 days a year). Very bad, but it could be worse! Give me Tony Soprano any day! That’s life under the Principalities and Powers. Will we ever get rid of government corruption and deception? Don’t kid yourself! But remember, the alternative is not Utopia; it’s bloody Chaos. Those who pose as philosopher-kings are only emperors wearing new clothes.

So says Zarathustra.